A platform that publishes public arrest records carries a serious responsibility. The information we display is tied to real people, and that means we have to be deliberate about preventing defamation, harassment, and misuse. This article explains the specific safeguards we've built into America's Top Mugshot — not vague promises, but the actual protections that are live on the platform right now.

The Rating Floor: Why No Score Drops Below 5.0

The most visible anti-defamation protection on our platform is the rating display floor. If a mugshot's average rating falls below 5.0, it is shown as 5.0 to all visitors. The actual rating is stored accurately — we never alter the data itself — but the public-facing score never drops below a neutral midpoint.

Why does this matter? Without a floor, a small group of users could coordinate to give someone the lowest possible score, effectively turning our rating system into a tool for public humiliation. A person featured on our platform has already been through an arrest. Piling on with rock-bottom scores serves no informational purpose — it's just cruelty.

The floor prevents that. Even in a worst-case scenario where someone is targeted with a flood of low ratings, the number the public sees never drops into territory that reads as a personal attack. For a full explanation of how scores are calculated and protected, read our article on how ratings work on our platform.
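
A minimal sketch of the display floor described above, not our production code. The function names and the sample ratings are hypothetical; the only values taken from this article are the 5.0 floor and the rule that the stored average is never modified.

```python
DISPLAY_FLOOR = 5.0  # floor value stated in this article


def average(ratings: list[float]) -> float:
    """Plain arithmetic mean of the stored ratings (assumed aggregation)."""
    return sum(ratings) / len(ratings)


def displayed_rating(stored_average: float) -> float:
    """Return the public-facing score without touching the stored value."""
    return max(stored_average, DISPLAY_FLOOR)


# Hypothetical example: a profile targeted with a run of very low ratings.
stored = average([1, 1, 2, 1])
print(stored)                    # 1.25 (kept as-is in storage)
print(displayed_rating(stored))  # 5.0  (what visitors see)
print(displayed_rating(8.4))     # 8.4  (scores above the floor are unchanged)
```

Applying the floor at display time rather than at write time is what lets the stored data stay accurate.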

One Vote Per Person, 10 Per Hour

Every user gets exactly one rating per mugshot. You can change your vote anytime, but you can't stack multiple votes to inflate or deflate a score. This rule is enforced automatically on every submission, so a script or automated tool gets the same single vote as anyone else.

On top of that, users can submit a maximum of 10 ratings per hour. This prevents anyone from rapidly rating dozens of profiles to push scores in a particular direction. Under normal browsing, you'd never hit this limit. It exists entirely to stop abuse.
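
The two limits above can be sketched together as follows. This is an illustration under stated assumptions, not the platform's implementation: the `RatingStore` class is hypothetical, the hourly limit is modeled as a sliding 60-minute window, and a changed vote is assumed to count toward the limit. The article does not specify either of those last two details.

```python
import time
from collections import defaultdict, deque

MAX_RATINGS_PER_HOUR = 10  # limit stated in this article
WINDOW_SECONDS = 3600      # assumed: sliding one-hour window


class RatingStore:
    """Hypothetical in-memory store: one vote per (user, mugshot)."""

    def __init__(self):
        self.votes = {}                   # (user_id, mugshot_id) -> score
        self.recent = defaultdict(deque)  # user_id -> submission timestamps

    def rate(self, user_id, mugshot_id, score, now=None):
        """Record or replace a vote. Returns False if the user is rate limited."""
        now = time.time() if now is None else now
        window = self.recent[user_id]
        # Drop submissions that are an hour old or older.
        while window and now - window[0] >= WINDOW_SECONDS:
            window.popleft()
        if len(window) >= MAX_RATINGS_PER_HOUR:
            return False
        window.append(now)
        # Keying by (user, mugshot) means a second vote overwrites the first.
        self.votes[(user_id, mugshot_id)] = score
        return True

    def scores_for(self, mugshot_id):
        return [s for (_, m), s in self.votes.items() if m == mugshot_id]


store = RatingStore()
store.rate("user-1", "mugshot-a", 3, now=0)
store.rate("user-1", "mugshot-a", 7, now=1)  # changes the vote, does not add one
print(store.scores_for("mugshot-a"))         # [7]
```

Because votes are keyed by user and mugshot, repeating a submission can only replace a score, never add a second one.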

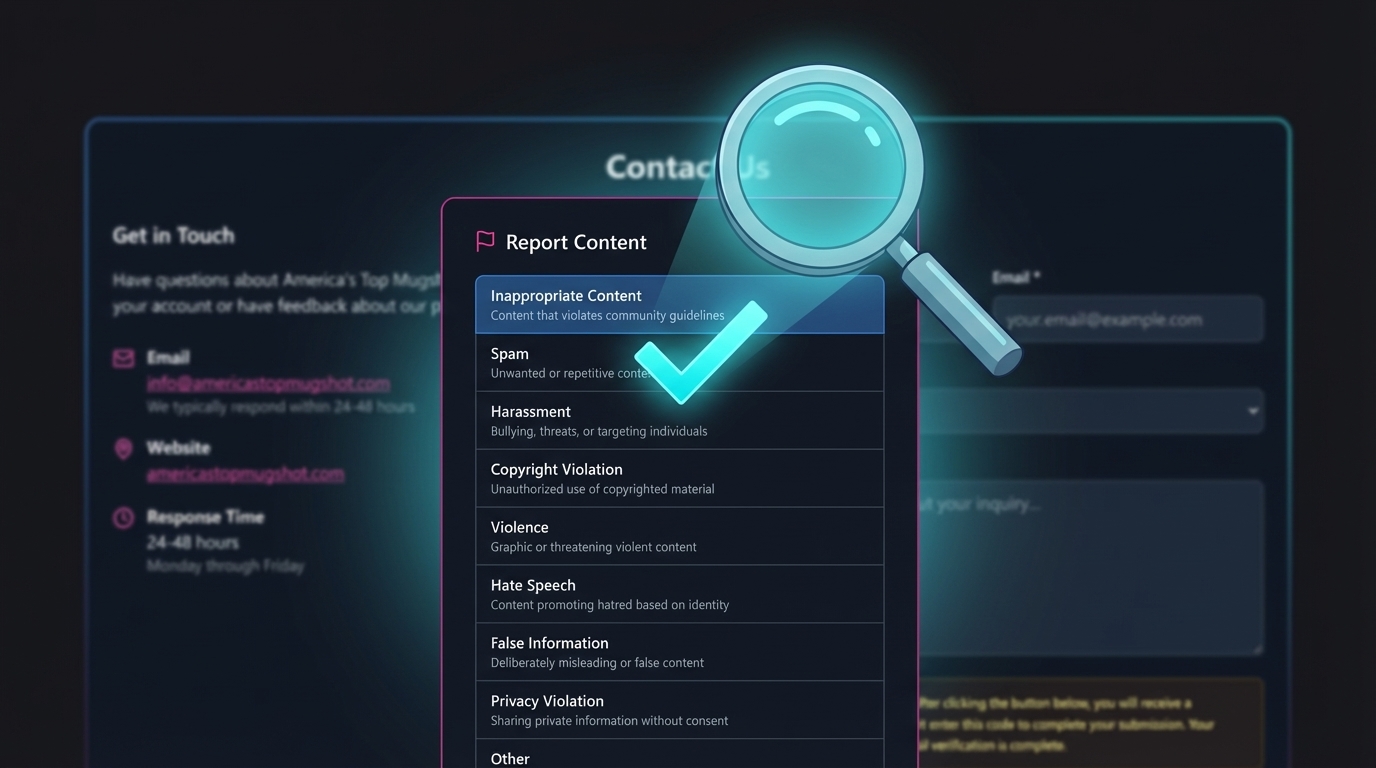

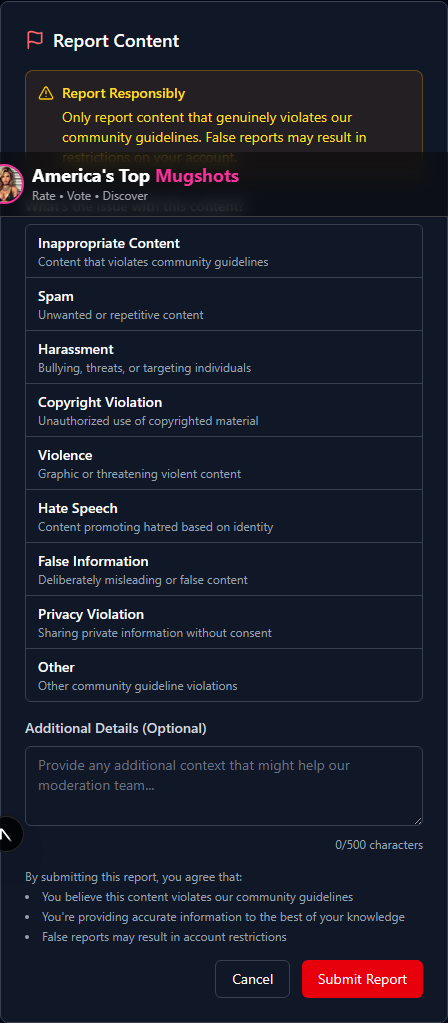

Community Reporting: How You Help Us Moderate

We can't review every piece of content in real time, and we don't pretend to. That's where our community comes in. Every mugshot listing has a Report button — a flag icon that opens a reporting dialog where you can flag content that violates our guidelines.

When you report something, you choose from nine specific categories:

- Inappropriate Content — Content that violates community guidelines

- Spam — Unwanted or repetitive content

- Harassment — Bullying, threats, or targeting individuals

- Copyright Violation — Unauthorized use of copyrighted material

- Violence — Graphic or threatening violent content

- Hate Speech — Content promoting hatred based on identity

- False Information — Deliberately misleading or incorrect content

- Privacy Violation — Sharing private information without consent

- Other — Any other guideline violation

You can also add a written description (up to 500 characters) with additional context. Once submitted, your report goes directly to our moderation team for review.

One important detail: each user can only report the same piece of content once. This prevents anyone from flooding our review queue with duplicate reports in an attempt to force a removal. If you've found inaccurate information rather than abusive content, see our guide on how to request a correction.
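
As a rough sketch of the reporting rules above (nine categories, a 500-character description, one report per user per piece of content), something like the following would enforce them. The class and field names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum

MAX_DESCRIPTION_CHARS = 500  # limit stated in this article


class ReportCategory(Enum):
    INAPPROPRIATE_CONTENT = "Inappropriate Content"
    SPAM = "Spam"
    HARASSMENT = "Harassment"
    COPYRIGHT_VIOLATION = "Copyright Violation"
    VIOLENCE = "Violence"
    HATE_SPEECH = "Hate Speech"
    FALSE_INFORMATION = "False Information"
    PRIVACY_VIOLATION = "Privacy Violation"
    OTHER = "Other"


@dataclass(frozen=True)
class Report:
    reporter_id: str
    content_id: str
    category: ReportCategory
    description: str = ""


class ReportQueue:
    """Hypothetical queue that accepts one report per (user, content) pair."""

    def __init__(self):
        self._seen = set()
        self.pending = []

    def submit(self, report: Report) -> bool:
        if len(report.description) > MAX_DESCRIPTION_CHARS:
            raise ValueError("description exceeds 500 characters")
        key = (report.reporter_id, report.content_id)
        if key in self._seen:
            return False  # duplicate report from the same user is ignored
        self._seen.add(key)
        self.pending.append(report)
        return True


queue = ReportQueue()
first = Report("user-1", "listing-42", ReportCategory.HARASSMENT, "Targets a named person.")
print(queue.submit(first))  # True
print(queue.submit(first))  # False: same user, same content
```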

How Our Moderation Team Handles Reports

Reports don't result in automatic removal. Every report is reviewed by a real person on our moderation team before any action is taken. Here's what happens:

- Report received — Your submission is logged with a timestamp and assigned a priority level based on the category and content.

- Review — A moderator examines the reported content, your description, and any supporting context. They compare the content against our community guidelines and the original public records.

- Decision — The moderator takes one of three actions:

  - Approved — The content is confirmed as compliant and the report is closed.

  - Removed — The content is hidden from public view with a recorded reason.

  - Dismissed — The report doesn't meet the threshold for action.

- Logged — Every decision is recorded with who made it, when, and why. This creates a complete audit trail that holds our own team accountable.

Content that is removed is hidden, not deleted. We preserve the original content in case a decision needs to be reversed or reviewed later. This protects both the person who was reported and the person who submitted the report.
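
The review steps above can be sketched as a small decision function. This is a simplified illustration, assuming a hypothetical `Content` record and an in-memory `audit_log` list. What it takes from this article is the three outcomes, the rule that removal hides content instead of deleting it, and the requirement that every decision records who, when, and why.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class Decision(Enum):
    APPROVED = "approved"
    REMOVED = "removed"
    DISMISSED = "dismissed"


@dataclass
class Content:
    content_id: str
    body: str
    hidden: bool = False


@dataclass(frozen=True)
class AuditEntry:
    content_id: str
    moderator_id: str
    decision: Decision
    reason: str
    decided_at: datetime


audit_log: list[AuditEntry] = []  # hypothetical append-only log


def resolve_report(content: Content, moderator_id: str,
                   decision: Decision, reason: str) -> AuditEntry:
    """Apply a moderator's decision and record it in the audit log."""
    if not reason.strip():
        raise ValueError("a written reason is required")
    if decision is Decision.REMOVED:
        content.hidden = True  # hidden from public view; body is preserved
    entry = AuditEntry(content.content_id, moderator_id, decision,
                       reason, datetime.now(timezone.utc))
    audit_log.append(entry)
    return entry


comment = Content("c-1", "original text")
resolve_report(comment, "mod-7", Decision.REMOVED, "Harassment of a named person")
print(comment.hidden, comment.body)  # True original text
```

Keeping the body intact is what makes a later reversal possible: undoing the decision only needs to flip `hidden` back and add another log entry.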

Automated Detection: Catching Problems Before You See Them

Not all harmful content waits for a user to report it. We run automated checks that scan for obvious violations before content ever reaches the public.

These checks look for:

- Slurs and hate speech — Known harmful terms are flagged automatically.

- Threats and harassment language — Patterns associated with threatening behavior trigger a review.

- Spam patterns — Repetitive or promotional content is caught and queued for moderation.

- Personal information leaks — Phone numbers and email addresses that appear where they shouldn't are detected and flagged.

When the automated system flags something, it assigns a confidence level. High-confidence matches on serious violations (like threats) are quarantined immediately: they are kept out of public view until a moderator can review them. Lower-confidence flags are placed in the review queue for human judgment.

We track how accurate these automated checks are over time. Rules that produce too many false positives — flagging content that turns out to be fine — get adjusted or disabled. The goal is to catch real problems without over-censoring legitimate content.
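
To illustrate the confidence-based triage described above, here is a deliberately simplified sketch. Everything specific in it is an assumption made for the example: the regular expressions, the confidence numbers, the 0.9 quarantine threshold, and the rule names. Real detection rules would be broader and tuned against measured false positives.

```python
import re
from dataclasses import dataclass

QUARANTINE_THRESHOLD = 0.9  # assumed cutoff for "high confidence"


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: re.Pattern
    confidence: float  # assumed, hand-assigned for this sketch
    serious: bool      # whether a match counts as a serious violation


RULES = [
    Rule("threat",
         re.compile(r"\bI (?:will|am going to) (?:hurt|kill) you\b", re.IGNORECASE),
         0.95, True),
    Rule("email",
         re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
         0.8, False),
    Rule("phone",
         re.compile(r"\b\d{3}[-. ]\d{3}[-. ]\d{4}\b"),
         0.6, False),
]


def triage(text: str) -> str:
    """Return 'publish', 'review_queue', or 'quarantine' for a piece of text."""
    matches = [rule for rule in RULES if rule.pattern.search(text)]
    if not matches:
        return "publish"
    if any(r.serious and r.confidence >= QUARANTINE_THRESHOLD for r in matches):
        return "quarantine"  # held from public view until a moderator reviews it
    return "review_queue"    # left for human judgment


print(triage("Nice weather today"))        # publish
print(triage("Call me at 555-123-4567"))   # review_queue
print(triage("I will hurt you"))           # quarantine
```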

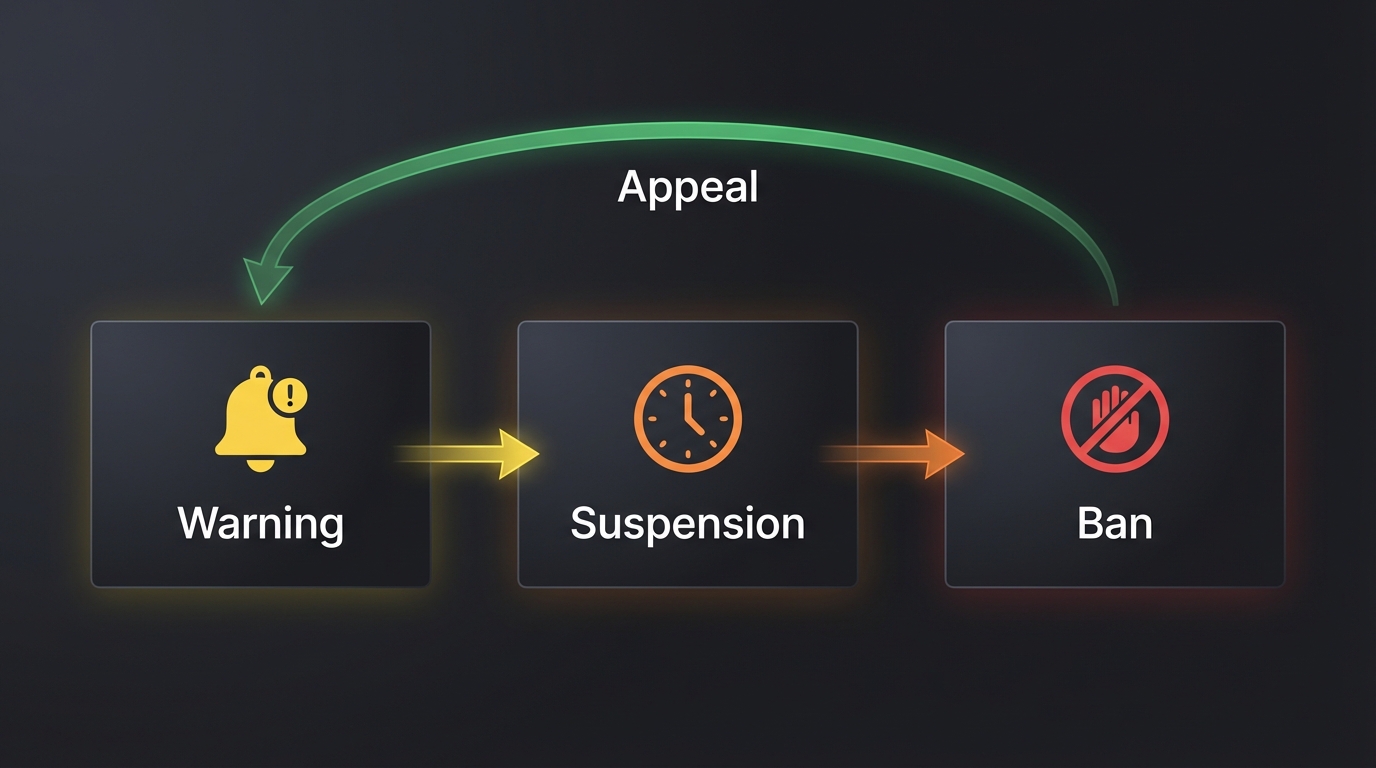

What Happens When Someone Breaks the Rules

Users who violate our community guidelines face consequences that are proportional to the offense. We don't jump straight to permanent bans for a first-time minor issue, but we also don't look the other way on serious violations.

The progression works like this:

- Warning — A first notification that specific behavior violated guidelines, with an explanation of what was wrong and what's expected going forward.

- Temporary Suspension — Repeated violations or more serious offenses result in a time-limited restriction on the account. The duration depends on the severity.

- Permanent Ban — Extreme or persistent violations result in permanent removal from the platform.

Every action against a user is documented with a written reason, and users have the right to appeal. If you believe a moderation action against your account was a mistake, you can submit an appeal that will be reviewed by a different team member than the one who made the original decision.
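
The progression above could be modeled roughly like this. The sketch is hypothetical: the article does not state how many violations count as "persistent" or how severity is graded, so the `PERSISTENT_AFTER` threshold and the three severity levels are assumptions. The appeal helper reflects the one rule that is stated, that an appeal goes to a different team member than the original decision-maker.

```python
from enum import Enum

PERSISTENT_AFTER = 3  # assumed: prior violations that count as "persistent"


class Severity(Enum):
    MINOR = 1
    SERIOUS = 2
    EXTREME = 3


class Sanction(Enum):
    WARNING = "warning"
    TEMPORARY_SUSPENSION = "temporary suspension"
    PERMANENT_BAN = "permanent ban"


def next_sanction(prior_violations: int, severity: Severity) -> Sanction:
    """Pick a sanction proportional to history and severity."""
    if severity is Severity.EXTREME or prior_violations >= PERSISTENT_AFTER:
        return Sanction.PERMANENT_BAN
    if severity is Severity.SERIOUS or prior_violations >= 1:
        return Sanction.TEMPORARY_SUSPENSION
    return Sanction.WARNING


def pick_appeal_reviewer(original_moderator: str, team: list[str]) -> str:
    """Choose a reviewer who did not make the original decision."""
    candidates = [m for m in team if m != original_moderator]
    if not candidates:
        raise LookupError("no independent reviewer available")
    return candidates[0]


print(next_sanction(0, Severity.MINOR).value)            # warning
print(next_sanction(1, Severity.MINOR).value)            # temporary suspension
print(next_sanction(0, Severity.EXTREME).value)          # permanent ban
print(pick_appeal_reviewer("mod-1", ["mod-1", "mod-2"]))  # mod-2
```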

Accountability Goes Both Ways

We hold our users accountable — and we hold ourselves accountable too. Every moderation action taken on this platform is logged with a complete record: who made the decision, when it was made, what the content looked like before and after, and the reason for the action.

This means:

- No content is silently removed without a paper trail.

- No user is disciplined without a documented reason.

- No moderator can take action without it being recorded.

- Removed content is preserved (not deleted) so decisions can be reviewed or reversed.

This level of transparency isn't just good practice — it's a safeguard against our own team making mistakes. And when mistakes happen, the audit trail means we can identify them, fix them, and learn from them.

What We Don't Allow

To be direct about it: this platform is not a place to harass, bully, or defame anyone. The arrests shown here are a matter of public record, but having a public record does not make someone a target.

We do not allow:

- Coordinated campaigns to manipulate someone's rating

- Hate speech or discriminatory language of any kind

- Threats, harassment, or calls for violence

- Posting private information (phone numbers, addresses, social media accounts)

- False reports intended to harass or censor

- Spam, scams, or promotional content

Our broader philosophy on how we approach this responsibility is explained in our article on why we don't shame or humiliate anyone.

Why This Matters

Building a mugshot platform without defamation protections would be irresponsible. Public records serve an important function in a transparent society, but that transparency comes with an obligation to prevent misuse.

Every protection described in this article — the rating floor, the one-vote limit, the reporting system, the moderation queue, the automated detection, the graduated discipline, and the accountability logging — exists because we believe you can have transparency without cruelty. The two are not in conflict.

If you see something on our platform that shouldn't be there, use the Report button. If you have questions about our policies, reach out to us directly. We're here to get this right.